As AI and real-time processing collide with the laws of physics, the centralized data center model is reaching its breaking point.

The centralized data center model had a good run. For most of the last decade, the Cloud meant a handful of hyperscale campuses in Tier 1 markets — Northern Virginia, Dublin, Singapore — where economy of scale was the only number that mattered. Build big, build central, let the fiber do the work.

That model is running out of road.

As agentic AI, autonomous systems, and industrial IoT move from pilot to production, the laws of physics are starting to win the argument against the laws of economics. The rush to the edge isn’t a trend. It’s a structural correction, driven by something no amount of capital expenditure can fix: the speed of light. Yes, literally, the speed of light.

The latency problem

A 100-millisecond round-trip delay was an acceptable annoyance in the era of email and web pages. In the world of AI inference at the edge, it’s a failure state.

You and I can tolerate a few seconds while ChatGPT thinks about flour varieties for our next bake. That’s fine. But when an autonomous vehicle needs a split-second decision from an AI model, the data can’t afford the transit tax of a 500-mile round trip to a core data center. By Intel’s estimate, a single AV generates up to 4 terabytes of data per day. Uploading even a fraction of that to a central cloud for real-time processing is impractical on technical grounds, and the economics are no better.
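To put the 4 TB figure in network terms, a quick back-of-envelope conversion helps. The sketch below assumes decimal terabytes and perfectly even data generation across 24 hours; both are simplifications, and the function name is illustrative rather than taken from any source.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def sustained_mbps(terabytes_per_day: float) -> float:
    """Average uplink rate (megabits per second) needed to ship a daily data volume."""
    bits_per_day = terabytes_per_day * 1e12 * 8  # decimal TB -> bits (assumption)
    return bits_per_day / SECONDS_PER_DAY / 1e6


if __name__ == "__main__":
    per_vehicle = sustained_mbps(4.0)
    print(f"One vehicle:    {per_vehicle:.0f} Mbps sustained")
    # 1,000 vehicles, converted from Mbps to Gbps
    print(f"Fleet of 1,000: {per_vehicle * 1000 / 1000:.0f} Gbps sustained")
```

Under those assumptions, one vehicle works out to roughly 370 Mbps around the clock, and every vehicle added to the fleet adds the same again.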

IEEE research on haptic internet and tactile feedback systems puts the latency ceiling at 1 to 5 milliseconds. To hit that number, compute needs to sit within 10 to 50 miles of the end user. That’s the edge in its truest sense — not a regional hub, but neighbourhood-level compute.
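The link between a latency ceiling and physical distance can be sketched with simple propagation arithmetic. This is a rough illustration, assuming signals travel through optical fiber with a refractive index of about 1.47 (a typical textbook value) along a perfectly straight route, and ignoring switching, queuing, and inference time. The function names are hypothetical.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458
FIBER_REFRACTIVE_INDEX = 1.47  # assumed typical value for silica fiber
KM_PER_MILE = 1.609344

# Light in fiber travels at roughly two-thirds of its vacuum speed.
SPEED_IN_FIBER_KM_S = SPEED_OF_LIGHT_KM_S / FIBER_REFRACTIVE_INDEX


def round_trip_ms(one_way_miles: float) -> float:
    """Best-case round-trip propagation delay over fiber, in milliseconds."""
    round_trip_km = 2 * one_way_miles * KM_PER_MILE
    return round_trip_km / SPEED_IN_FIBER_KM_S * 1000


def max_one_way_miles(budget_ms: float) -> float:
    """Farthest the compute can sit if propagation alone may consume budget_ms."""
    round_trip_km = budget_ms / 1000 * SPEED_IN_FIBER_KM_S
    return round_trip_km / 2 / KM_PER_MILE


if __name__ == "__main__":
    for miles in (10, 50, 250):
        print(f"{miles:>4} miles away: {round_trip_ms(miles):.2f} ms round trip")
    print(f"1 ms budget: about {max_one_way_miles(1.0):.0f} miles at most")
```

Under these assumptions, a site 50 miles away already spends roughly 0.8 ms on propagation alone. Real fiber routes are longer than straight-line distance and every hop adds delay, so the practical radius is tighter than the arithmetic suggests.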

Data gravity

As data volumes grow, they develop gravity. The more data an application generates at a specific location, the harder it becomes to move it anywhere else.

Historically, we moved data to compute. Today, we’re forced to move compute to data. Gartner projects that by 2026, 75% of enterprise-generated data will be created and processed outside a traditional centralised data center or cloud. If you’re a data center operator, this changes your value proposition. You’re no longer selling space and power — you’re selling proximity. The strategic advantage is shifting toward facilities that sit at the intersection of local fibre on-ramps and dense urban populations.

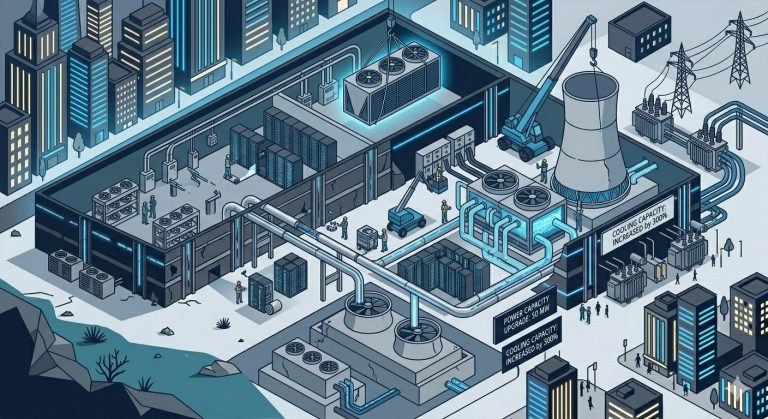

The engineering reality

Building at the edge means building in constrained environments — basements, repurposed urban industrial shells, spaces the size of a shipping container. These sites were never designed for the density requirements of modern AI.

Traditional retail colocation was built for 5kW to 10kW per rack. Today’s NVIDIA H100 and Blackwell clusters are pushing toward 40kW, 60kW, and well beyond 100kW per rack. You can’t solve that with forced-air cooling — there simply isn’t enough cubic volume to move that much heat. The shift to liquid cooling (direct-to-chip) and rear-door heat exchangers isn’t a roadmap item. At the edge, it’s table stakes.
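The airflow problem can be made concrete with the standard sensible-heat relation: airflow equals heat load divided by air density, specific heat, and the temperature rise across the rack. The sketch below is an estimate only. The air properties and the 12 °C temperature rise are assumed round numbers, not figures from any vendor or from this article, and the function name is illustrative.

```python
AIR_DENSITY_KG_M3 = 1.2           # assumed: air at roughly 20 °C, sea level
AIR_SPECIFIC_HEAT_J_KG_K = 1005   # assumed: dry air at constant pressure
M3_S_TO_CFM = 2118.88             # cubic metres per second -> cubic feet per minute


def required_airflow_cfm(rack_kw: float, delta_t_c: float = 12.0) -> float:
    """Airflow needed to carry away rack_kw of heat at a given air temperature rise."""
    watts = rack_kw * 1000
    m3_per_s = watts / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_c)
    return m3_per_s * M3_S_TO_CFM


if __name__ == "__main__":
    for kw in (10, 40, 100):
        print(f"{kw:>3} kW rack: {required_airflow_cfm(kw):,.0f} CFM")
```

With these assumptions a 10 kW rack needs on the order of 1,500 CFM, and the requirement scales linearly, so a 100 kW rack needs about ten times that through the same footprint.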

The operational reality

In a Tier 1 market, you build for tenants. At the edge, you build for applications — and the economics are different. Managing one 100MW campus is a solved problem. Managing fifty 2MW sites spread across a tri-state area is something else entirely.

This is driving the rise of lights-out operations — facilities that are almost entirely autonomous, monitored by AI-driven DCIM tools that flag a failing fan or an impending power anomaly before either becomes a problem. It’s also pushing operators toward prefabricated modular deployments — factory-tested, dropped into place, standardised enough to maintain without a specialist on-site.
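As a much simpler stand-in for what such monitoring does, the sketch below flags a fan reading that drifts sharply from its recent baseline using a rolling z-score. It is not how any particular DCIM product works; the class name, window size, threshold, and spread floor are all hypothetical choices for illustration.

```python
from collections import deque
from statistics import mean, stdev


class FanDriftDetector:
    """Flags a reading that deviates sharply from the trailing baseline."""

    def __init__(self, window: int = 30, threshold: float = 3.0,
                 min_spread: float = 10.0, warmup: int = 5):
        self.readings = deque(maxlen=window)  # recent normal readings only
        self.threshold = threshold            # z-score that counts as anomalous
        self.min_spread = min_spread          # floor so a flat baseline still works
        self.warmup = warmup                  # readings needed before judging

    def observe(self, rpm: float) -> bool:
        """Return True if rpm is anomalous relative to the trailing window."""
        anomalous = False
        if len(self.readings) >= self.warmup:
            baseline = mean(self.readings)
            spread = max(stdev(self.readings), self.min_spread)
            anomalous = abs(rpm - baseline) / spread > self.threshold
        if not anomalous:
            self.readings.append(rpm)  # keep outliers out of the baseline
        return anomalous


if __name__ == "__main__":
    detector = FanDriftDetector()
    telemetry = [9000, 9010, 8990, 9005, 8995, 9002, 8998, 7200]
    for rpm in telemetry:
        if detector.observe(rpm):
            print(f"Fan anomaly: {rpm} RPM")
```

Across fifty unattended sites, the value lies less in the statistics than in the routing: an alert like this has to reach someone who can dispatch a technician before the fan actually fails.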

The LoadLine Perspective

The rush to the edge is a double-edged sword for the operator. On one side, it commands a real premium — urban space and power are genuinely scarce, and that scarcity has pricing power. On the other, the technical complexity can erode margins quickly if you’re not thinking clearly about it going in.

Our focus at LoadLineData tends to land in three places. Grid-first site selection: finding locations where medium-voltage power already exists, which sidesteps the three-to-five-year utility queue that kills most edge timelines before they start. Thermal readiness: helping operators transition from air-cooled legacy designs to liquid-ready infrastructure before the hyperscalers come knocking with their GPU clusters. And commercial translation: bridging the gap between a hyperscaler who wants edge proximity and an operator who still has a P&L to protect.

The data center revolution will not be televised. But it will be localised. The winners in this next phase will be those who stop thinking about the cloud as a destination and start treating it as a distributed utility — one that lives wherever the user happens to be.

.ml.

Sources

- Gartner: 75% of Enterprise Data at the Edge. Gartner Identifies the Top Strategic Technology Trends for 2026 (and earlier 2025 infrastructure reports).
- JLL (Jones Lang LaSalle): Data Center Market Expansion and Power Constraints. JLL 2025 Global Data Center Outlook. Note: This report specifically highlights the decoupling of AI training (near power) and inference (near people).
- IEEE: Tactile Internet and Latency Requirements. IEEE 1918.1 “Tactile Internet” Standards Working Group. This provides the 1–10ms requirement for haptic/robotic feedback.
- Intel / Network World: Autonomous Vehicle Data Generation (4TB per day). One autonomous car will use 4,000 GB of data per day. Based on insights from former Intel CEO Brian Krzanich.