The data center industry spent the last three years building for AI training. Now comes the harder part.

For most of the last three years, the data center industry has been focused on one thing: training clusters, the massive, power-hungry facilities designed to ingest the internet and teach a model. Build big, find cheap land and cheap power (preferably somewhere nobody minds a 200MW substation), and let it run.

Training, for the most part, is an event. It happens, it finishes, and you move on. That made the infrastructure equation relatively straightforward.

Inference is different. Inference is the model actually doing something — answering a query, executing a task, making a decision. And we are now entering the inference phase of AI at scale. The infrastructure requirements that come with it are ones most of the industry isn’t fully prepared for.

From generative to agentic

The shift is happening right now: from generative AI to agentic AI. Few people seem to grasp its full implications.

Agentic AI reasons. It sequences. It decides what to do next without being told.

Generative AI, the kind most people are familiar with, produces outputs in response to inputs. Write this. Summarize that. Suggest some flour varieties for my next sourdough bake. These are predictive outcome models: capable, fast, and fundamentally reactive. They answer when asked.

To illustrate the difference: I use Gemini and ChatGPT to help write these articles. I find these ‘bots genuinely useful and I rely on them for organizing my thinking, doing research deep-dives, generating sample copy to react to, and producing the images that accompany each piece. But every step of the process still requires a decision from me. What topic? What angle? What do I want my audience to take away? Keep this paragraph or cut it? Publish now or later? The AI is a capable assistant, but I’m the one deciding what happens next, in what order, and why.

That’s generative AI. Agentic AI handles the whole sequence without me in the loop. Soup to nuts. Not because I asked it to — because it’s built to.

Gartner predicts that by the end of 2026, 40% of enterprise applications will feature multi-agent AI systems, up from less than 5% in 2025. That’s a significant jump in a short window. Personally, I’m quite excited about what that could mean for customer service. I look forward to no longer being frustrated by calling a customer support line staffed by people who barely know the product they’re there to support. I’m certain that AI agents will handle that task much better, and soon. But the more consequential deployments will be in supply chain management: routing freight in real time, optimising deliveries, managing returns, adjusting dynamically to disruption. All of it running continuously, without a human trigger.

That word, ‘continuously’, is worth sitting with. Unlike a human-initiated chatbot, an AI agent doesn’t wait. It doesn’t sleep. It doesn’t have an off-peak window. It creates a baseline load on data center infrastructure that is fundamentally different from anything we’ve designed for before.

What that means for infrastructure

When you build for agentic AI, the design parameters change across the board.

The continuous duty cycle alone is a challenge. Agents require always-on compute, which puts real strain on secondary power systems and cooling loops that were traditionally designed with off-peak recovery baked in. Agents don’t offer recovery windows.

Then there’s inter-agent communication. Agentic systems frequently involve multiple agents talking to each other — what are known as multi-agent architectures. This generates enormous east-west traffic within the data center, making high-speed switching fabric (InfiniBand, high-speed Ethernet) significantly more critical than it’s been in a world designed around north-south traffic flows.
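To get a feel for why east-west traffic grows so quickly, here is a minimal sketch. It assumes the worst case, where every agent may talk to every other agent (a full mesh). Real multi-agent systems are usually sparser, and the agent counts are purely illustrative.

```python
def mesh_links(agent_count: int) -> int:
    """Distinct agent-to-agent pairs in a full mesh (an assumed, worst-case topology)."""
    return agent_count * (agent_count - 1) // 2


# Illustrative agent counts, not figures from any real deployment.
for agents in (4, 16, 64):
    print(f"{agents} agents -> up to {mesh_links(agents)} communicating pairs")
```

The pair count grows with the square of the number of agents, which is why the switching fabric becomes a design concern long before the agent count looks large.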

And then there’s latency. If an agent is managing a real-time trading floor or monitoring an electrical grid, the reasoning has to happen in milliseconds. The 100ms round-trip most of us have designed around doesn’t come close.
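A rough way to see why a 100ms round trip falls short: an agent rarely makes one call. It chains several reasoning steps, and each step pays the round trip again. The sketch below is a back-of-the-envelope model, not a benchmark. The step count, the per-step compute time, and the 1ms "nearby" round trip are all assumed values chosen for illustration.

```python
def chain_latency_ms(steps: int, round_trip_ms: float, compute_ms: float) -> float:
    """Total latency of a strictly sequential agent chain.

    Assumes every step waits for the previous one and pays one full
    network round trip plus a fixed compute time (a simplification).
    """
    return steps * (round_trip_ms + compute_ms)


STEPS = 5          # assumed number of sequential reasoning steps
COMPUTE_MS = 10.0  # assumed per-step inference time

for label, rtt_ms in (("100ms round trip", 100.0), ("1ms round trip", 1.0)):
    total = chain_latency_ms(STEPS, rtt_ms, COMPUTE_MS)
    print(f"{label}: {total:.0f} ms for {STEPS} steps")
```

Under these assumptions the network dominates the total, which is the point: for a multi-step agent, the round trip is multiplied, not paid once.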

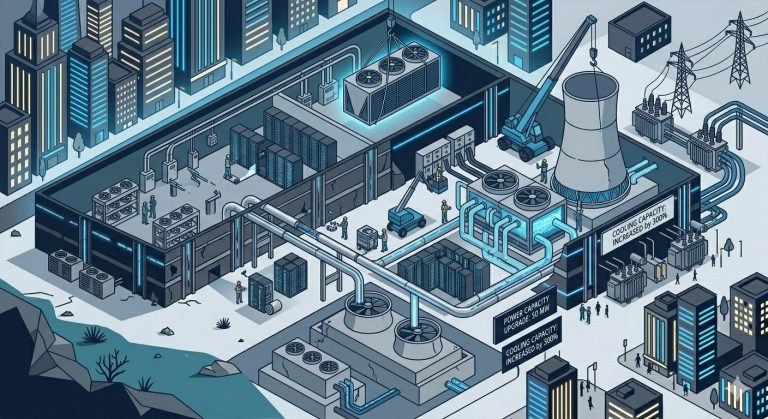

The thermal ceiling

The bottleneck for this shift isn’t only the silicon, or the optical switching, or the power supply. It’s the thermal envelope.

Most legacy data centers are air-cooled, designed to move enough air through a rack to dissipate 10kW. An inference cluster for agentic AI can push 60kW to 100kW per rack without breaking a sweat. You cannot solve that by moving more air. For a given temperature rise, the airflow you need scales linearly with the heat load, and there simply isn’t enough room to push that much air through a rack.
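The arithmetic behind that can be sketched with the standard sensible-heat relation: airflow = heat load / (air density × specific heat × temperature rise). The air properties below are textbook approximations, and the 12 K inlet-to-outlet temperature rise is an assumed value for illustration, not a figure from any particular facility.

```python
AIR_DENSITY_KG_M3 = 1.2            # approximate density of air near room temperature
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0  # approximate specific heat of air
M3S_TO_CFM = 2118.88               # cubic metres per second to cubic feet per minute


def airflow_m3_per_s(heat_load_w: float, delta_t_k: float = 12.0) -> float:
    """Volumetric airflow needed to carry away heat_load_w watts.

    delta_t_k is the assumed air temperature rise across the rack.
    """
    return heat_load_w / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_k)


for rack_kw in (10, 60, 100):
    flow = airflow_m3_per_s(rack_kw * 1000)
    print(f"{rack_kw} kW rack: {flow:.2f} m^3/s (~{flow * M3S_TO_CFM:,.0f} CFM)")
```

Whatever temperature rise you assume, a 100kW rack needs ten times the airflow of a 10kW rack through roughly the same physical opening.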

To be inference-ready, an operator needs to transition to direct-to-chip liquid cooling. It’s the only approach that handles the density requirements of H100 and Blackwell-class chips without an impractical expansion of physical floor space.

The LoadLine perspective

At LoadLineData, we help operators distinguish between genuine agentic demand and hype-driven capital allocation — and the difference matters. A lot.

Not every workload being labelled as AI is going to drive agentic infrastructure requirements. Much of what’s currently being deployed is still generative inference — useful, commercially valuable, but manageable within existing infrastructure with targeted upgrades. The operators we worry about are the ones making large CapEx commitments based on projections that treat training demand, generative inference, and agentic inference as the same problem. They’re not. The risk profile, the thermal planning, and the siting logic are different for each.

The transition from lab to field is already underway. If your data center isn’t ready for the inference wave, it’s a bit like opening a brick-and-mortar bookstore just as broadband arrived. The content isn’t the problem. The delivery model is.

.ml.

Sources

- Gartner: Predicts 2025: Agentic AI Will Drive the Next Wave of Enterprise Automation — Gartner Inc., 2024

- McKinsey Global Institute: The State of AI in 2024 — McKinsey & Company, 2024

- Uptime Institute: Annual Global Data Center Survey 2024 — thermal density trends and liquid cooling adoption rates

- NVIDIA: Inference at Scale: Infrastructure Planning for LLM Deployment — NVIDIA Technical Brief, 2024

- IDC: Worldwide AI Infrastructure Forecast, 2024–2028 — IDC, 2024