As AI compute density goes vertical, thousands of existing facilities are discovering their infrastructure simply can’t keep up.

For decades, the data center industry operated on a predictable curve. A standard enterprise rack drew 5kW. A high-density one drew 10kW. Build a facility with raised floors and CRAH units, and you were set for a fifteen-year lifecycle.

That curve has become a vertical wall.

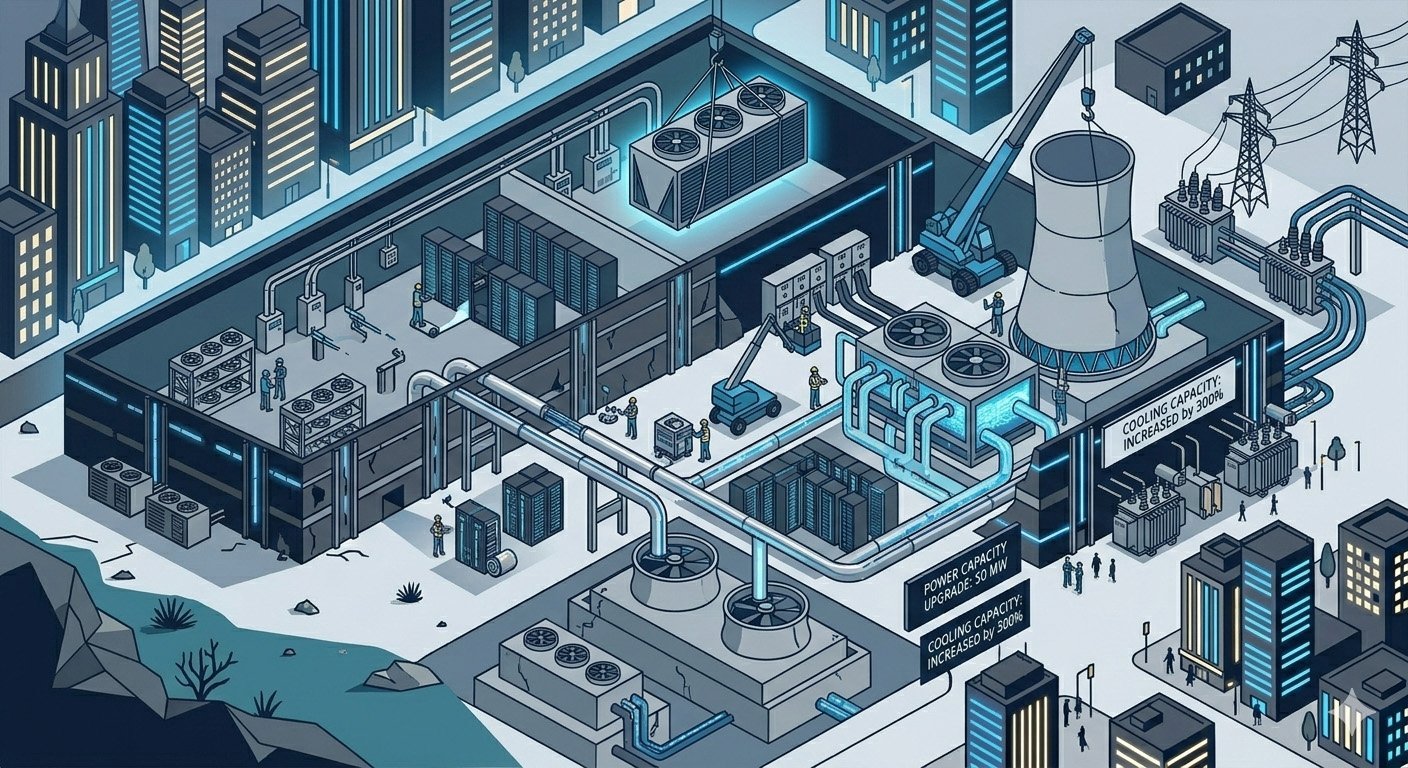

We’ve entered a period where thousands of existing data centers are physically and electrically incapable of hosting the next generation of high-performance compute. Yesterday’s infrastructure cannot meet tomorrow’s demand — and 2026 is the year that gap becomes impossible to ignore.

Does my rack look big in this?

The fundamental problem is concentration. In 2020, a data center suite might have been designed for an average of 150 watts per square foot. Today, AI clusters built on NVIDIA Blackwell or AMD Instinct accelerators require anywhere from 1,000 to 2,000 watts per square foot.

That’s not an incremental upgrade. It’s a different category entirely — and it demands a complete engineering rethink.

Take weight first. A fully loaded AI rack can exceed 6,000 lbs. Most legacy raised floors were rated for 2,500 to 3,000 lbs. To host modern AI, you don’t just need more power — you literally need a stronger building.
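To put numbers on that gap, here is a minimal sketch that compares the rack weight and floor ratings quoted above. It assumes the ratings are per-rack-position limits expressed in the same units as the rack weight, which is a simplification; a real structural assessment would look at point loads, tile and pedestal ratings, and the slab underneath. The function name is hypothetical.

```python
# Illustrative check of the weight figures quoted in the article.
# Assumption: the floor ratings are per-rack-position limits in lbs,
# so they can be compared directly with the loaded rack weight.


def floor_overload_ratio(rack_weight_lb: float, floor_rating_lb: float) -> float:
    """Return how many times the rack weight exceeds the floor rating."""
    if floor_rating_lb <= 0:
        raise ValueError("floor rating must be positive")
    return rack_weight_lb / floor_rating_lb


AI_RACK_LB = 6000                  # fully loaded AI rack, from the article
LEGACY_RATINGS_LB = (2500, 3000)   # legacy raised-floor range, from the article

overloads = {
    rating: floor_overload_ratio(AI_RACK_LB, rating)
    for rating in LEGACY_RATINGS_LB
}

for rating, ratio in overloads.items():
    print(f"Floor rated {rating} lbs: rack is {ratio:.1f}x the rating")
```

On these figures the rack weighs two to two-and-a-half times what the floor was rated to carry, which is why reinforcement, or moving the heaviest racks onto slab, tends to come up early in a retrofit conversation.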

Then there’s the cooling ceiling. Air is a poor heat-transfer medium. Once a rack exceeds 30kW, you can’t move enough air through the chassis to keep the chips from thermal throttling: slowing themselves down to stay alive. As much as sixty percent of legacy data center power distribution limits are now being exceeded by AI workloads. The result is a stranded capacity problem, where operators have megawatts available at the building entrance but can’t get that power to the rack or the heat out of the room.
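The airflow problem can be roughed out with the standard sensible-heat relation, P = ρ · V · c_p · ΔT, solved for the volumetric flow V. The sketch below is a back-of-envelope estimate, not a design calculation: the air properties are approximate room-condition values, and the 15 K temperature rise across the rack is an assumption chosen for illustration, not a figure from any specific facility.

```python
# Back-of-envelope airflow needed to carry a rack's heat away with air.
# Sensible heat: P = rho * V * cp * dT  ->  V = P / (rho * cp * dT)

AIR_DENSITY = 1.2      # kg/m^3, approximate, near 20 C at sea level
AIR_CP = 1005.0        # J/(kg*K), approximate specific heat of air
M3S_TO_CFM = 2118.88   # cubic feet per minute in one cubic meter per second


def required_airflow_m3s(rack_kw: float, delta_t_k: float) -> float:
    """Volumetric airflow (m^3/s) needed to remove rack_kw at a given air temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    return rack_kw * 1000.0 / (AIR_DENSITY * AIR_CP * delta_t_k)


ASSUMED_DELTA_T_K = 15.0  # hypothetical inlet-to-outlet temperature rise

for rack_kw in (5, 10, 30, 60):
    flow = required_airflow_m3s(rack_kw, ASSUMED_DELTA_T_K)
    print(f"{rack_kw:>2} kW rack: {flow:.2f} m^3/s ({flow * M3S_TO_CFM:,.0f} CFM)")
```

Under these assumptions a 30kW rack needs roughly 1.7 cubic meters of air per second, about 3,500 CFM, through a single chassis footprint, and the requirement scales linearly with power. That is the practical reason liquid takes over at these densities.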

Why 2026 is the tipping point

The first wave of purpose-built AI facilities — designed from the ground up for liquid cooling and gigawatt-scale loads — is coming online this year. For legacy operators, that changes the competitive landscape in a way that a price adjustment won’t fix.

You’re no longer competing with the data center down the street. You’re competing with AI factories built with 800V DC power distribution and direct-to-chip liquid cooling as the baseline. If your facility requires a tenant to bring their own cooling, or to derate their hardware to fit your airflow, you’ve crossed a line — from operator to legacy warehouse. Whether you’re ready to hear that or not.

The brownfield problem

Because lead times for new utility interconnections have stretched to four to eight years in major markets like Northern Virginia and Dallas, there’s a scramble to retrofit existing sites. But retrofitting is where most legacy disasters actually happen.

Bringing water to the rack requires a significant overhaul of mechanical piping — legacy sites weren’t built with the ceiling clearance for 6-inch headers or the floor space for coolant distribution units. Moving to rack-level 800V DC isn’t just a new PDU order. It often requires a complete rethink of UPS and transformer sequencing.

Our position at LoadLineData is that the answer for most operators in 2026 isn’t full-scale demolition. It’s surgical retrofitting: carving out high-density AI pods within a legacy hall and isolating the high-heat, high-weight racks with dedicated liquid loops, while leaving the rest of the facility air-cooled for the traditional enterprise workloads that still pay the bills. Preserve what works. Upgrade where it counts.

The repricing

As capacity tightens, how data center space is valued is changing. In the real estate era, you paid for square footage. Today, you pay for resource availability — measured in usable, deliverable power.

Occupancy rates for high-density-ready infrastructure are projected to reach 95% by late 2026. If you have a facility that can genuinely handle a 60kW rack today, you’re holding the most valuable asset in the digital economy right now. If, however, you’re locked into the 5kW-per-rack era, a significant write-down on your valuation isn’t a future risk. It’s a present one.

The LoadLine Perspective

We don’t look at a data center as a building. We look at it as a thermal and electrical envelope — one that either fits the silicon being asked to run inside it, or doesn’t.

Our work in this area tends to land in three places. Auditing for stranded capacity — finding where the legacy design is leaving power on the table because of cooling or weight constraints that most operators haven’t fully quantified. Bridging the technical gap — translating what a hyperscaler actually needs into what’s achievable within your existing four walls. And future-proofing the commercial terms — making sure new Master Service Agreements account for the shift to liquid cooling and higher densities before those changes trigger OpEx spikes that weren’t in anyone’s model.
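As a rough illustration of what a stranded capacity audit is looking for, the sketch below treats a hall as a chain of limits and reports the weakest link. It is a simplified model, not our audit methodology: the class, its field names, and the example numbers are all hypothetical, and each limit is assumed to have already been expressed as an equivalent IT load in kW.

```python
from dataclasses import dataclass


@dataclass
class HallLimits:
    """Hypothetical per-hall limits, each expressed as supportable IT load in kW."""
    utility_kw: float       # power available at the building entrance
    distribution_kw: float  # what UPS, switchgear and PDUs can deliver to racks
    cooling_kw: float       # heat the mechanical plant can reject
    structural_kw: float    # IT load the floor can carry at target rack weights

    def constraints(self) -> dict[str, float]:
        """Downstream limits that can strand utility power."""
        return {
            "distribution": self.distribution_kw,
            "cooling": self.cooling_kw,
            "structural": self.structural_kw,
        }

    def binding_constraint(self) -> str:
        """Name of the tightest downstream limit."""
        limits = self.constraints()
        return min(limits, key=limits.get)

    def deliverable_kw(self) -> float:
        """Usable IT load: the smallest of utility power and all downstream limits."""
        return min(self.utility_kw, *self.constraints().values())

    def stranded_kw(self) -> float:
        """Utility power that cannot be turned into usable IT load."""
        return max(0.0, self.utility_kw - self.deliverable_kw())


# Made-up example values for illustration only.
hall = HallLimits(utility_kw=4000, distribution_kw=3200,
                  cooling_kw=1800, structural_kw=2500)

print(f"Deliverable: {hall.deliverable_kw():.0f} kW")
print(f"Stranded:    {hall.stranded_kw():.0f} kW")
print(f"Bound by:    {hall.binding_constraint()}")
```

In this invented example, cooling caps the hall at 1,800 kW and leaves 2,200 kW of utility power stranded. The point of modeling it this way is that upgrading anything other than the binding constraint recovers nothing.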

The infrastructure reset isn’t coming. It’s here. The facilities that adapt their physical envelope to the reality of the silicon they’re being asked to host will be the ones still relevant in five years. The ones that don’t will find out what stranded capacity actually costs.

.ml.

Sources

- Goldman Sachs Research: AI Infrastructure — The $1 Trillion Capex Cycle — Goldman Sachs, 2024 (data center occupancy projections and AI power demand)

- TrendForce: AI Server and Data Center Power Density Trends — TrendForce, 2024/2025

- TechArena: 8 Ways AI Will Rewrite Data Center Infrastructure in 2026 — TechArena, 2025

- Nautilus Data Technologies: Retrofitting Existing Facilities for AI Workloads — Nautilus Data Technologies, 2024